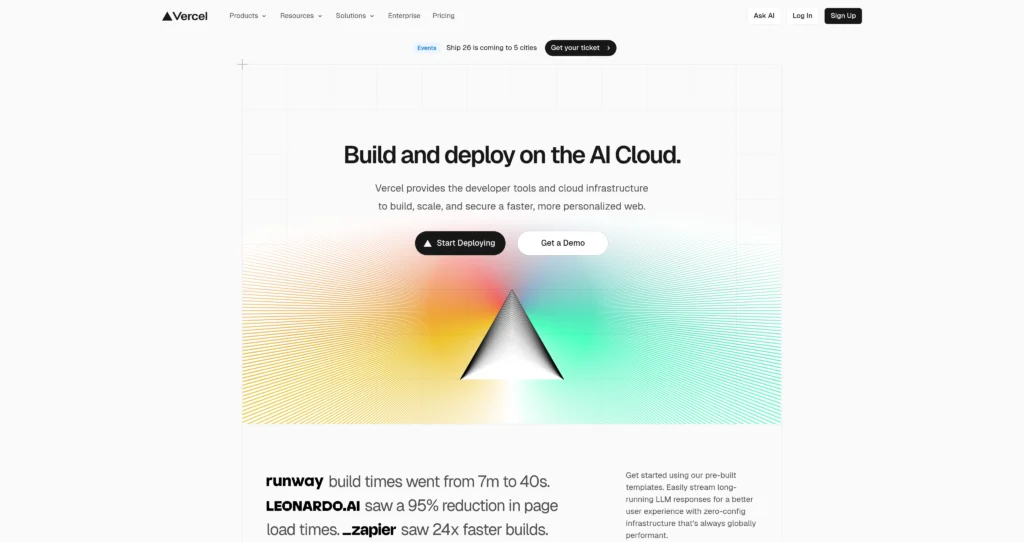

About Vercel

Vercel operates as a cloud platform tailored for frontend developers, specializing in static site hosting and serverless functions. Designed primarily around the React ecosystem, it serves as the creator and native host for Next.js. The platform caters to engineering teams who want to ship user interfaces quickly without managing backend infrastructure. Recently, Vercel has aggressively positioned itself in the artificial intelligence space with the Vercel AI SDK, giving developers a standardized toolkit to stream LLM responses directly into their web applications.

Behind the scenes, the platform relies on a global edge network to cache content and execute code as close to the end user as possible. It integrates directly with GitHub, GitLab, and Bitbucket to automate CI/CD pipelines through simple git pushes. Major brands like the Washington Post and Under Armour rely on its 99.99% enterprise uptime SLA. Committing heavily to Vercel’s ecosystem does introduce vendor lock-in, however, particularly when your architecture depends on its specific serverless and edge middleware implementations.

Key Features

Vercel AI SDK : Integrates LLMs directly into React and Next.js apps with streaming UI components. Developers can build ChatGPT-like interfaces in minutes rather than days.

Global Edge Network : Caches static assets and executes functions across an anycast network spanning multiple regions. This drastically reduces time-to-first-byte (TTFB) latency for global users.

Preview Deployments : Generates unique, shareable URLs for every git push or pull request automatically. Engineering teams can gather stakeholder feedback on live staging environments before merging code.

Serverless Functions : Executes backend logic on demand without provisioning or managing server infrastructure. Compute costs remain strictly tied to your actual application traffic.

Next.js Native Optimization : Automatically configures Image Optimization, Middleware, and React Server Components out of the box. You get the maximum possible performance from the framework without fighting webpack configurations.

Edge Middleware : Runs lightweight JavaScript functions before a request completes, enabling fast personalization or authentication. This protects sensitive routes or serves localized content with virtually zero latency penalty.

Web Analytics : Ingests real-time traffic and Core Web Vitals data directly from the edge network. This helps engineering teams pinpoint exactly which pages are causing performance bottlenecks without injecting heavy third-party tracking scripts.

v0 Generative UI : Translates text prompts directly into copy-and-paste React components using artificial intelligence. This significantly speeds up the initial prototyping phase for frontend developers building complex dashboards.
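To make the Edge Middleware idea above more concrete, here is a minimal, framework-agnostic sketch of a function that inspects a request before it reaches a page and decides whether to let it through, redirect it, or rewrite it. It deliberately does not use the real Next.js or Vercel middleware API. The `IncomingRequest` and `MiddlewareResult` types, the `/dashboard` prefix, the `session` cookie name, and the country-to-locale map are all assumptions made for illustration.

```typescript
// Hypothetical sketch of edge-middleware style routing logic.
// None of these types come from Vercel or Next.js; they are illustrative only.

interface IncomingRequest {
  path: string;
  cookies: Record<string, string>;
  country?: string; // assumed geo hint supplied by the edge runtime
}

type MiddlewareResult =
  | { action: "next" }
  | { action: "redirect"; location: string }
  | { action: "rewrite"; path: string };

// Assumed configuration values, chosen only for this example.
const PROTECTED_PREFIX = "/dashboard";
const LOCALE_BY_COUNTRY: Record<string, string> = { DE: "de", FR: "fr" };

function middleware(req: IncomingRequest): MiddlewareResult {
  // Authentication gate: protected routes require a session cookie.
  if (req.path.startsWith(PROTECTED_PREFIX) && !req.cookies["session"]) {
    return {
      action: "redirect",
      location: `/login?from=${encodeURIComponent(req.path)}`,
    };
  }

  // Personalization: serve a localized home page when a locale is known.
  const locale = req.country ? LOCALE_BY_COUNTRY[req.country] : undefined;
  if (locale && req.path === "/") {
    return { action: "rewrite", path: `/${locale}` };
  }

  // Otherwise let the request continue unchanged.
  return { action: "next" };
}
```

The logic stays small and synchronous on purpose, since the size and execution-time restrictions on middleware reward doing as little work as possible per request.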

Pros

✔ Zero-configuration deployments for major frameworks like Next.js, Nuxt, and SvelteKit.

✔ Vercel AI SDK dramatically simplifies streaming responses from OpenAI, Anthropic, and Google.

✔ Preview URLs for every commit accelerate QA and stakeholder feedback loops.

✔ Global edge network ensures exceptionally fast page load speeds out of the box.

✔ Generous free tier accommodates most personal projects, portfolios, and hackathons.

✔ First-party Web Analytics provide privacy-friendly insights without third-party cookies.

✔ Automatic infrastructure scaling handles sudden traffic spikes without manual intervention.

Cons

✖ Bandwidth and serverless execution overages on the Pro plan get expensive quickly for high-traffic sites.

✖ Heavy reliance on Next.js creates a real vendor lock-in effect for your architecture.

✖ Serverless cold starts can still occasionally impact latency on rarely accessed API endpoints.

✖ Background jobs and long-running tasks require external workarounds due to strict execution timeouts.

✖ The leap from Pro to Enterprise pricing is notoriously steep for mid-sized startups.

✖ Edge Middleware restrictions limit the size and execution time of your functions.

✖ Vercel is not a native database host, requiring you to rely on third-party providers for heavy data storage.

Plans & Pricing

| Plan | Type | Price | Intended Use | Inclusions |
|---|---|---|---|---|
| Hobby | Free | $0/month | Personal / Non-commercial | 100 GB bandwidth, 1,000,000 Edge Requests, 100 GB-hours Serverless Execution, Community Support, Deploy from CLI or personal git integrations. |
| Pro | Per User | $20/user/month | Commercial / Small Teams | 1 TB bandwidth, 10,000,000 Edge Requests, 1,000 GB-hours Serverless Execution, Email Support, Password Protection, Advanced Deployment Protection, 1 Concurrent Build per user. |
| Enterprise | Custom | Contact Sales | Mission-critical / Large Teams | Custom bandwidth and limits, SSO/SAML, 99.99% SLA, Isolated Infrastructure, Dedicated Success Manager, SOC2/HIPAA compliance, Custom Caching Rules. |
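As a rough way to reason about the Hobby and Pro allowances listed above, the following sketch compares a month of usage against each plan's included amounts and reports how far over it goes. The allowance figures and the $20 per-user price come from the table. The function names and the sample usage numbers are hypothetical, 1 TB is assumed to equal 1,000 GB, and overage rates are left out because the table does not state them.

```typescript
// Included monthly allowances, copied from the pricing table.
// Assumption: 1 TB is treated as 1,000 GB.
type Plan = "hobby" | "pro";

interface Usage {
  bandwidthGb: number;
  edgeRequests: number;
  executionGbHours: number;
}

const INCLUDED: Record<Plan, Usage> = {
  hobby: { bandwidthGb: 100, edgeRequests: 1_000_000, executionGbHours: 100 },
  pro: { bandwidthGb: 1_000, edgeRequests: 10_000_000, executionGbHours: 1_000 },
};

const PRO_PRICE_PER_USER_USD = 20;

// How much of each resource falls outside the plan's included allowance.
function overage(plan: Plan, usage: Usage): Usage {
  const included = INCLUDED[plan];
  return {
    bandwidthGb: Math.max(0, usage.bandwidthGb - included.bandwidthGb),
    edgeRequests: Math.max(0, usage.edgeRequests - included.edgeRequests),
    executionGbHours: Math.max(0, usage.executionGbHours - included.executionGbHours),
  };
}

// True when any single resource exceeds the plan's allowance.
function exceedsPlan(plan: Plan, usage: Usage): boolean {
  const over = overage(plan, usage);
  return over.bandwidthGb > 0 || over.edgeRequests > 0 || over.executionGbHours > 0;
}

// Base Pro cost before any overage charges (rates are not given in the table).
function proBaseCostUsd(seats: number): number {
  return seats * PRO_PRICE_PER_USER_USD;
}

// Hypothetical month for a personal site that suddenly gets popular.
const viralMonth: Usage = { bandwidthGb: 250, edgeRequests: 800_000, executionGbHours: 100 };
console.log(overage("hobby", viralMonth));
console.log(exceedsPlan("pro", viralMonth));
```

A check like this only shows whether usage fits within a tier. Estimating an actual bill would also need the current overage rates, which should be taken from Vercel's pricing page rather than assumed.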

FAQs

Q1: Can I host long-running AI models directly on Vercel?

No. Vercel is designed for serverless execution with strict timeouts. You must host heavy AI models on external GPU providers like Replicate or AWS, and use Vercel to handle the API routing and user interface.
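One way to picture this split is a thin routing layer that forwards a prompt to an externally hosted model and gives up if the reply does not arrive within the function's time budget. The sketch below assumes a hypothetical `ModelCall` function standing in for the request to the external provider. It is not a Vercel or Replicate API, and the deadline value is up to the caller.

```typescript
// Hypothetical sketch: bound a call to an externally hosted model with a deadline.
// `ModelCall` stands in for whatever client talks to the GPU provider.
type ModelCall = (prompt: string) => Promise<string>;

function callWithDeadline(call: ModelCall, prompt: string, ms: number): Promise<string> {
  return new Promise<string>((resolve, reject) => {
    // Reject once the time budget is spent. Note that this does not cancel
    // the underlying call; it only stops waiting for it.
    const timer = setTimeout(
      () => reject(new Error(`model call exceeded ${ms} ms`)),
      ms,
    );

    call(prompt).then(
      (value) => {
        clearTimeout(timer);
        resolve(value);
      },
      (error) => {
        clearTimeout(timer);
        reject(error);
      },
    );
  });
}
```

For work that routinely takes longer than the budget, a deadline like this is only a safety net. The job itself still needs to run on the external provider, with the result fetched later.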

Q2: Is Vercel only for Next.js applications?

While optimized heavily for Next.js, Vercel supports over 35 frontend frameworks. You can easily deploy Nuxt, SvelteKit, Astro, and standard React applications with zero configuration.

Q3: What happens if my site goes viral on the Hobby plan?

To prevent runaway costs, Vercel temporarily pauses your deployment if you exceed the hard limits of the free tier. To keep the site online during a traffic spike, you will need to upgrade to the Pro plan and pay for any subsequent overages.

Q4: How does the Vercel AI SDK work with different LLM providers?

The SDK acts as a unified interface for multiple AI providers, including OpenAI, Anthropic, and Google. It standardizes the streaming of text and UI components, so you can swap AI models by changing just one line of code in your backend.
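The "one line of code" claim is easier to see with a toy version of the pattern. The sketch below is not the Vercel AI SDK's API. It is a simplified illustration of a provider-agnostic streaming interface, and `TextProvider`, `fakeProvider`, and `collect` are invented names with canned replies standing in for real model output.

```typescript
// Hypothetical sketch of a unified streaming interface across providers.
// This is NOT the Vercel AI SDK; every name here is invented for illustration.

interface TextProvider {
  name: string;
  // Yields the response incrementally, chunk by chunk.
  stream(prompt: string): AsyncIterable<string>;
}

// Stand-in provider that "streams" a canned reply one word at a time.
function fakeProvider(name: string, reply: string): TextProvider {
  return {
    name,
    async *stream(_prompt: string) {
      for (const word of reply.split(" ")) {
        yield word + " ";
      }
    },
  };
}

const providerA = fakeProvider("provider-a", "Hello from A");
const providerB = fakeProvider("provider-b", "Hello from B");

// Swapping models is a one-line change: point `active` at providerB instead.
const active: TextProvider = providerA;

// Application code depends only on the shared interface, not on the provider.
async function collect(provider: TextProvider, prompt: string): Promise<string> {
  let text = "";
  for await (const chunk of provider.stream(prompt)) {
    text += chunk;
  }
  return text.trim();
}

collect(active, "Say hello").then((text) => console.log(text));
```

Because `collect` only knows about `TextProvider`, nothing downstream changes when the active provider does. That is the property the SDK's unified interface is described as offering.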

Q5: Does Vercel offer a built-in database?

Vercel offers managed Postgres, KV (Redis), and Blob storage through strategic partnerships. However, these are priced separately from the core hosting plans and are intended for lightweight use rather than enterprise-scale data warehousing.

Published on: May 11, 2026